The practical 'human in the loop' framework

Download the PDF: human-in-the-loop-framework.pdf

“Human in the loop”.

I hear this phrase dozens of times per week. In LinkedIn posts. In board meetings about AI strategy. In product requirements. In compliance documents that tick the “responsible AI” box. It’s become the go-to phrase for any situation where humans interact with AI decisions.

But there’s a story I think of whenever I hear “human in the loop”, and it makes me think we’re grossly oversimplifying things. It’s a story about the man who saved the world.

The man who saved the world

September 26, 1983. The height of the Cold War. Lieutenant Colonel Stanislav Petrov was the duty officer at a secret Soviet bunker, monitoring early warning satellites. His job was simple: if computers detected incoming American missiles, report it immediately so the USSR could launch its counterattack.

12:15 AM… the unthinkable. Every alarm in the facility started screaming. The screens showed five US ballistic missiles, 28 minutes from impact. Confidence level: 100%. Petrov had minutes to decide whether to trigger a chain reaction that would start nuclear war and could very well end civilisation as we knew it.

He was the “human in the loop” in the most literal, terrifying sense.

Everything told him to follow protocol. His training. His commanders. The computers.

But something felt wrong. His intuition, built from years of intelligence work, whispered that this didn’t match what he knew about US strategic thinking.

Against every protocol, against the screaming certainty of technology, he pressed the button marked “false alarm”.

Twenty-three minutes of gripping fear passed before ground radar confirmed: no missiles. The system had mistaken a rare alignment of sunlight on high-altitude clouds for incoming warheads.

His decision to break the loop prevented nuclear war.

Beyond “human in the loop”

What made Petrov effective wasn’t just being “in the loop” - it was having genuine authority, time to think, and understanding the bigger picture well enough to question the system.

Most of today’s “human in the loop” implementations have none of these qualities.

Instead, we see job applications rejected by algorithms before recruiters ever see promising candidates. Customer service bots that frustrate instead of giving agents the context to actually solve problems. AI systems sold as human replacements when they should be human amplifiers.

A practical framework

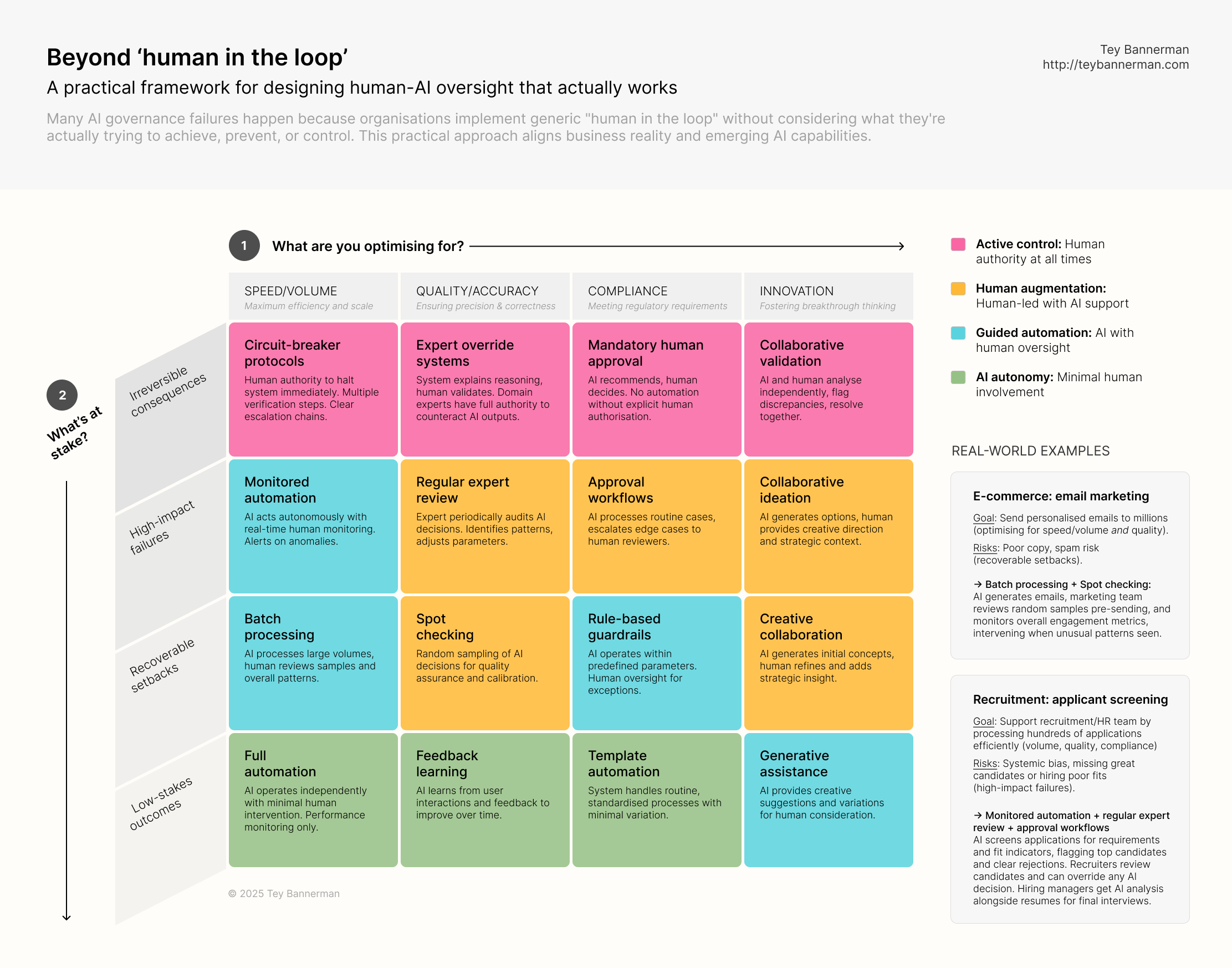

I’m sharing here the framework I use with organisations building AI systems. It starts with two practical questions every leader can answer: what are you optimising for, and what’s at stake?

I synthesise this into 16 different research-backed approaches - from “circuit breaker protocols” for irreversible decisions to “feedback learning” for low-stakes automation. Each is designed around giving humans the authority, time, and understanding they need to be genuinely effective, while staying cognisant of AI’s potential to automate routine tasks and perform consistently with the right guardrails in place.

The goal isn’t perfect categorisation. It’s to move beyond generic “human in the loop” and build the systems we actually intend, not the ones we accidentally create.

-

Also published on LinkedIn in August 2025.